Now that the dust has finally settled on Microsoft's big HoloLens 2 announcement, the company is circling back to offer more granular detail on some aspects of the device we still don't know about.

Aside from making some team members accessible for interviews, the company has also launched a video streaming series that delves into the intricacies of the HoloLens 2 and allows users and developers to ask more questions. The first episode aired on Tuesday, and added a few important new details to the HoloLens 2 story.

The stream was hosted by Daniel Escudero, a senior technical designer for the Mixed Reality Academy, and he was joined by Nick Klingensmith, a lead engineer for the Mixed Reality Academy, and Jesse McCullough, a veteran HoloLens developer who was recently hired as a program manager on the developer ecosystem team for Microsoft's HoloLens.

Hand Tracking & Interface Interaction

Most of the initial discussion was devoted to explaining more about what we've already seen demoed on stage, or, in my case, in person with the HoloLens 2 team at Mobile World Congress. For example, hand tracking and virtual object manipulation on the HoloLens 2 are particularly impressive compared to the first HoloLens.

At one point, McCullough admits (and I agree) that, "There are a million wrong ways to air tap [on the first HoloLens] and about three right ways." It's true: the finger gestures needed to interact with the first HoloLens were unwieldy and, for some, difficult to master. The new hand tracking system is a massive leap forward and makes controlling virtual objects a breeze because it tracks up to 25 joints in the human hand, allowing you to execute multi-hand interactions with remarkable precision.

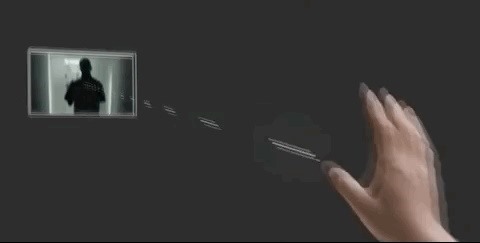

During my testing of the HoloLens 2, I did everything I could to make the hand tracking fail, and it held up spectacularly. In Microsoft's new video stream, the team showed off a few animations that accurately depict what it's like to control virtual objects using the HoloLens 2.

Noise-Canceling Mic Benefits & Biometric Security Concerns

Another feature highlighted during the session was the device's noise-canceling mic, which uses a five-channel microphone array. The mic in the HoloLens 2 lets you speak commands that bring up text or initiate actions while you're also interacting with virtual interfaces with your hands. Noise cancellation is vital for enterprise customers using the HoloLens 2 on a noisy factory floor, in a busy warehouse, or in another loud manufacturing environment.

"The new mics have a great ability to pick out your voice from the environment around you. I think the number that I saw was 90 decibels. So it can hear you even when there's about 90 decibels of ambient audio around you," said Klingensmith.

"This was kind of like a problem for the HoloLens 1 if you had it in a demo situation or conference floor, you couldn't rely on the voice recognition, and so most people just kind of crossed it off of their list like, 'Okay, we're not gonna rely on this audio stuff.' So now [HoloLens 2] picks out your voice. It can tell, even with an immensely loud noise next to you, so you can actually rely on [it] at this time. So I'm really looking forward to seeing what people do with like some of the speech services like speech recognition."

Two other key features of the HoloLens 2 are eye tracking and biometric authentication. While both are incredibly convenient, the idea that developers might have access to such sensitive biometric data is a concern for security-minded users. During the question and answer session of the broadcast, the team addressed those concerns.

"Developers will be able to access eye tracking information and tell where a user is looking at," said McCullough. "I think you'll be able to tap into the Windows Hello API (Windows Hello is Microsoft's biometric sign-in system in Windows 10), but I don't think you'll be able to access the data from the camera while it's doing that [authentication]."

Old HoloLens Apps on HoloLens 2

As for those looking to port old HoloLens apps over to the new HoloLens 2, the team also outlined how that can be achieved.

"[HoloLens 2] won't natively run a HoloLens 1 app because HoloLens 1 is built on an x86 [Intel processor] architecture, and HoloLens 2 is ARM based. So anybody who has a HoloLens 1 app that they want to move over to run on a HoloLens 2 will have to move up to a more recent version of Unity," said McCullough.

"We recommend that they start implementing MRTK v2, and then they will have to compile for ARM. So not only will they have to compile their own app for ARM, but if they have any third-party libraries, or any of their own libraries, they'll have to either compile them themselves, or find an ARM compatible version. And then once you do all three of those, and you get past any errors that happen in there, and resolve those, then you can start working on actually adding in the new interaction model."
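The dependency audit McCullough describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `libraries_missing_arm`, the library names, and the idea of recording each library's available builds in a dictionary are all hypothetical, and nothing here reflects Unity's or MRTK's actual tooling.

```python
from typing import Dict, List, Set


def libraries_missing_arm(libraries: Dict[str, Set[str]]) -> List[str]:
    """Return the names of libraries that have no ARM build (hypothetical helper).

    `libraries` maps a library name to the set of architectures it ships for.
    """
    missing = []
    for name, architectures in libraries.items():
        # Compare case-insensitively so "ARM" and "arm" are treated alike.
        if "arm" not in {arch.lower() for arch in architectures}:
            missing.append(name)
    return sorted(missing)


# Hypothetical third-party libraries bundled with a HoloLens 1 app.
plugins = {
    "physics_plugin": {"x86"},
    "network_lib": {"x86", "ARM"},
    "audio_codec": {"x86", "x64"},
}

# Libraries listed here would need to be recompiled or replaced.
needs_work = libraries_missing_arm(plugins)
print(needs_work)
```

Any library the check flags would need to be recompiled for ARM or swapped for an ARM-compatible version before the app itself can be built for the HoloLens 2.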

Azure Spatial Anchors vs. Anchor Sharing/World Anchors

Finally, one of the most important aspects of using the HoloLens 2 in our new cloud-enabled world is the ability to share and work with persistent virtual content.

For developers already acquainted with Anchor Sharing, which let them save a World Anchor on one device and share it with another HoloLens user, the team offered some perspective on how the new Azure Spatial Anchors make it easier to share and work with persistent augmented reality content across the cloud ecosystem.

"We double down on [the previous anchor concept] with Azure Spatial Anchors in that we've made it a cloud-based service that you can attach the same anchors to, and it's multi-platform so you can use it across HoloLens, ARCore, ARKit, and hopefully future platforms to come," said McCullough.

"But it allows you to do the same thing, you can place an object in a particular physical environment and ask it to be remembered and that data syncs up to the cloud. And then, if somebody were to come by later with another HoloLens using your app, they'd be able to pull that cloud anchor down and see the same thing you left there. Or if they come by with a phone or tablet that's running ARKit or ARCore in your app, same thing."
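The save-then-retrieve flow McCullough describes can be modeled conceptually in Python. This is a minimal sketch, not the Azure Spatial Anchors API: the `AnchorStore` and `CloudAnchor` classes, their methods, and the in-memory dictionary standing in for the cloud service are all assumptions made for illustration.

```python
import uuid
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class CloudAnchor:
    """A placed object: where it sits and what content is attached (hypothetical)."""
    anchor_id: str
    position: Tuple[float, float, float]
    payload: str


class AnchorStore:
    """In-memory stand-in for a cloud anchor service (hypothetical)."""

    def __init__(self) -> None:
        self._anchors: Dict[str, CloudAnchor] = {}

    def save(self, position: Tuple[float, float, float], payload: str) -> str:
        """Store an anchor and return the ID that other devices can look up."""
        anchor_id = str(uuid.uuid4())
        self._anchors[anchor_id] = CloudAnchor(anchor_id, position, payload)
        return anchor_id

    def locate(self, anchor_id: str) -> Optional[CloudAnchor]:
        """Return the anchor for this ID, or None if it was never saved."""
        return self._anchors.get(anchor_id)


store = AnchorStore()

# The first user places an object and asks for it to be remembered.
shared_id = store.save((1.0, 0.5, 2.0), "maintenance note")

# Later, other devices running the same app pull the same anchor down.
for device in ("HoloLens", "ARKit phone", "ARCore tablet"):
    anchor = store.locate(shared_id)
    print(device, "sees", anchor.payload, "at", anchor.position)
```

The point of the sketch is that every device resolves the same identifier to the same stored content and position, regardless of platform. A real service would also have to match the anchor against the physical environment, which this model leaves out.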

This is just the first in a series of video stream sessions that will continue to shed more light on how end users and developers can get the most out of the HoloLens 2, which is scheduled to be released later this year. If all this sounds too good to pass up, check here to find out how to pre-order your own device.
