HoloLens Development Guides

HoloLens Dev 101: Building a Dynamic User Interface, Part 10 (Scaling Objects)

Object scale can make or break your HoloLens app, so it's important to get scaling in Unity down, including working with uniform and non-uniform factors, before moving on to other aspects of your app.

HoloLens Dev 101: Building a Dynamic User Interface, Part 9 (Moving Objects)

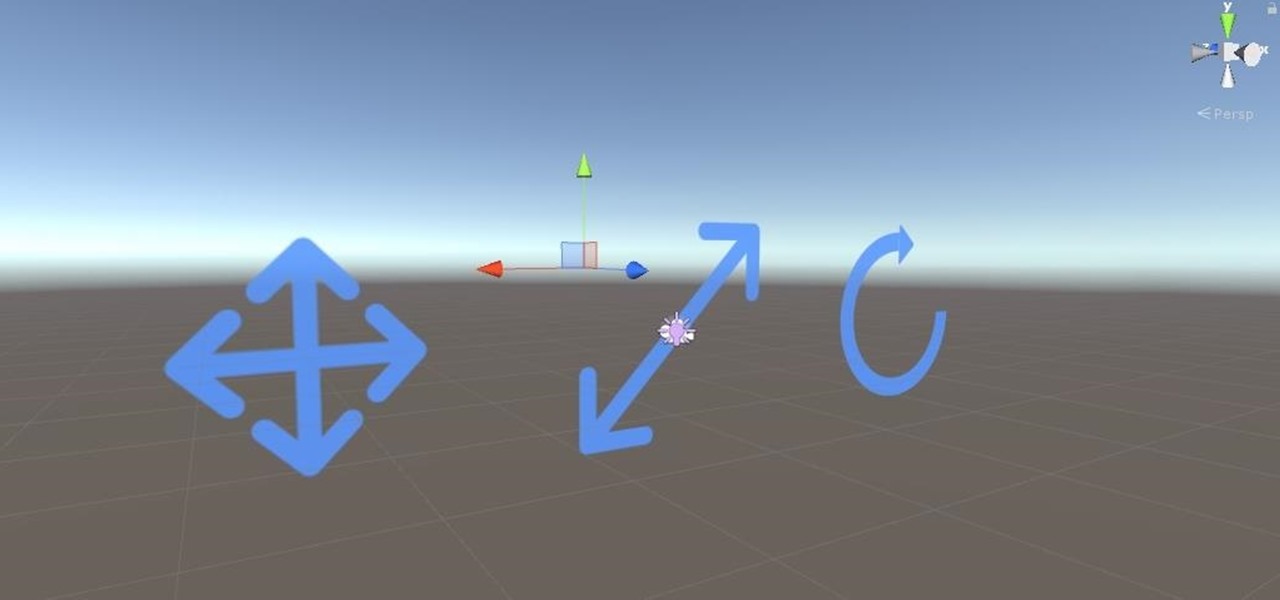

So after setting everything up, creating the system, working with focus and gaze, creating our bounding box and UI elements, unlocking the menu movement, as well as jumping through hoops refactoring a few parts of the system itself, we have finally made it to the point in our series on dynamic user interfaces for HoloLens where we get some real interaction.

HoloLens Dev 101: Building a Dynamic User Interface, Part 8 (Raycasting & the Gaze Manager)

Now that we have unlocked the menu movement, which is working very smoothly, we have to get to work on the gaze manager. But first, we have to make a course correction.

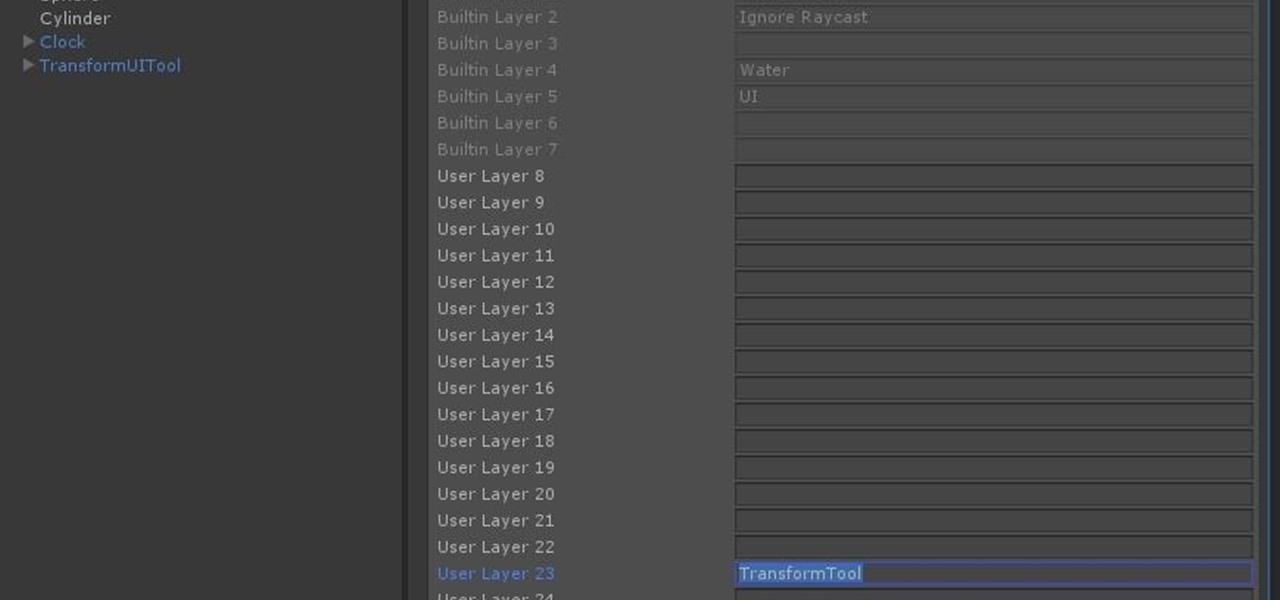

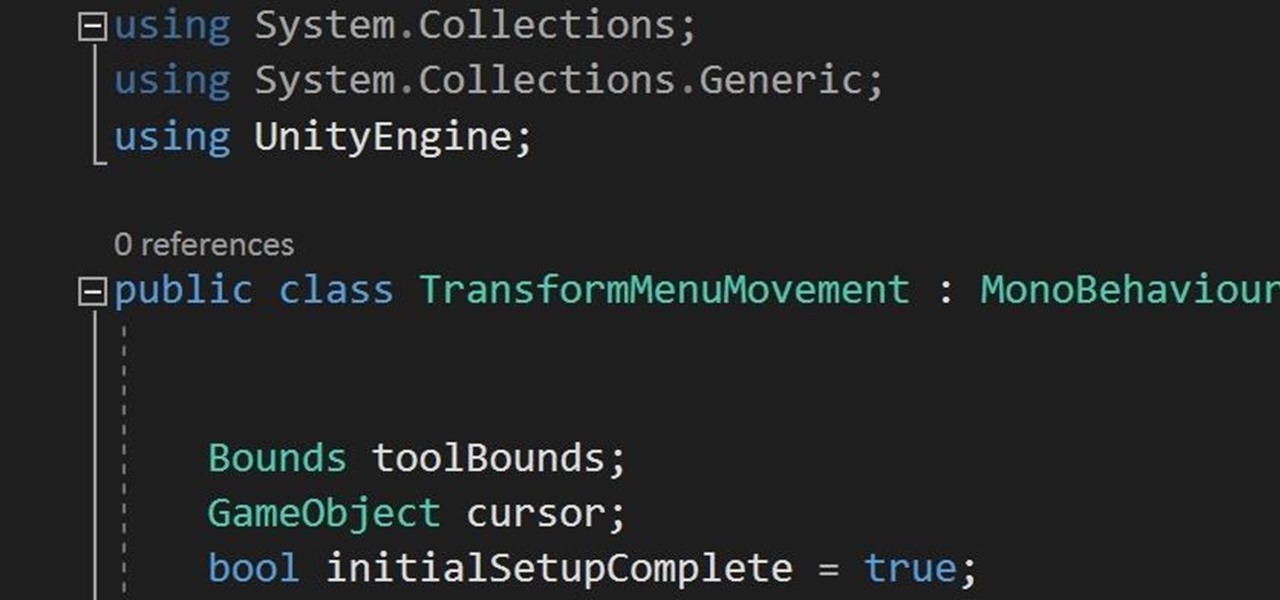

HoloLens Dev 101: Building a Dynamic User Interface, Part 7 (Unlocking the Menu Movement)

In the previous section of this series on dynamic user interfaces for HoloLens, we learned about delegates and events. At the same time, we used those delegates and events not only to attach our menu system to the user's gaze, but also to enable and disable the menu based on certain conditions. Now let's take that knowledge and build on it to make our menu system a bit more comfortable.

HoloLens Dev 101: Building a Dynamic User Interface, Part 6 (Delegates & Events)

In this chapter, we want to start seeing some real progress in our dynamic user interface. To do that, we will have our newly crafted toolset from the previous chapter appear where we are looking when we are looking at an object. To accomplish this we will be using a very useful part of the C# language: delegates and events.

HoloLens Dev 101: Building a Dynamic User Interface, Part 5 (Building the UI Elements)

Alright, calm down and take a breath! I know the object creation chapter was a lot of code. I will give you all a slight reprieve; this section should be nice and simple, at least in comparison.

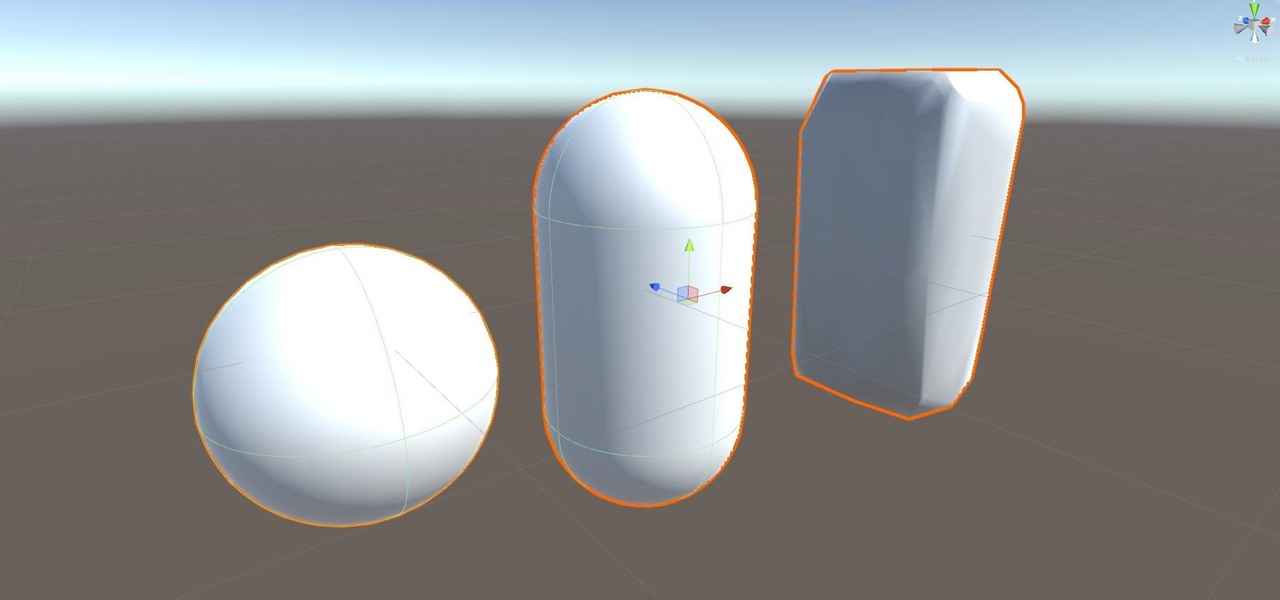

HoloLens Dev 101: Building a Dynamic User Interface, Part 4 (Creating Objects from Code)

After previously learning how to make the material of an object change with the focus of an object, we will build on that knowledge by adding new objects through code. We will accomplish this by creating our bounding box, which in the end is not actually a box, as you will see.

HoloLens Dev 101: Building a Dynamic User Interface, Part 3 (Focus & Materials)

We started with our system manager in the previous lesson in our series on building dynamic user interfaces, but to get there, aside from the actual moving, rotating, and scaling of objects, we need to make objects out of code in multiple ways, establish delegates and events, and use the surface of an object to inform our toolset placement.

News: Microsoft HoloLens 2 & Unity Used to Highlight the Threat to Endangered Whales at the Smithsonian

Although the enterprise use cases for the Microsoft HoloLens 2 continue to impress, the arts community just can't stay away from the best augmented reality headset on the market.

News: Microsoft Emerges from the Trenches with More Details Behind the Army Edition of HoloLens 2

When we got our first look at US Army soldiers testing Microsoft's modified HoloLens 2 last year, it still looked very much like the commercial edition, with some additional sensors attached.

News: Transform Your Friend into an AR Musical Synthesizer via This HoloLens 2 NFT App

One of the oldest electronic musical instruments is the theremin, a synthesizer that generates sound based on hand gestures, as featured in the classic "Good Vibrations" by the Beach Boys.

News: Japan-Based Developer Unleashes Giant AR Gundam Robot on the Public via HoloLens 2

The future of the HoloLens 2, according to Microsoft, is all about enterprise use cases. But that doesn't mean some of the more creative-minded HoloLens developers won't bend the top-tier augmented reality device to their own designs. The latest example of this trend comes from Japan.

HoloLens Dev 101: Building a Dynamic User Interface, Part 11 (Rotating Objects)

Continuing our series on building a dynamic user interface for the HoloLens, this guide will show how to rotate the objects that we already created, moved, and scaled in previous lessons.

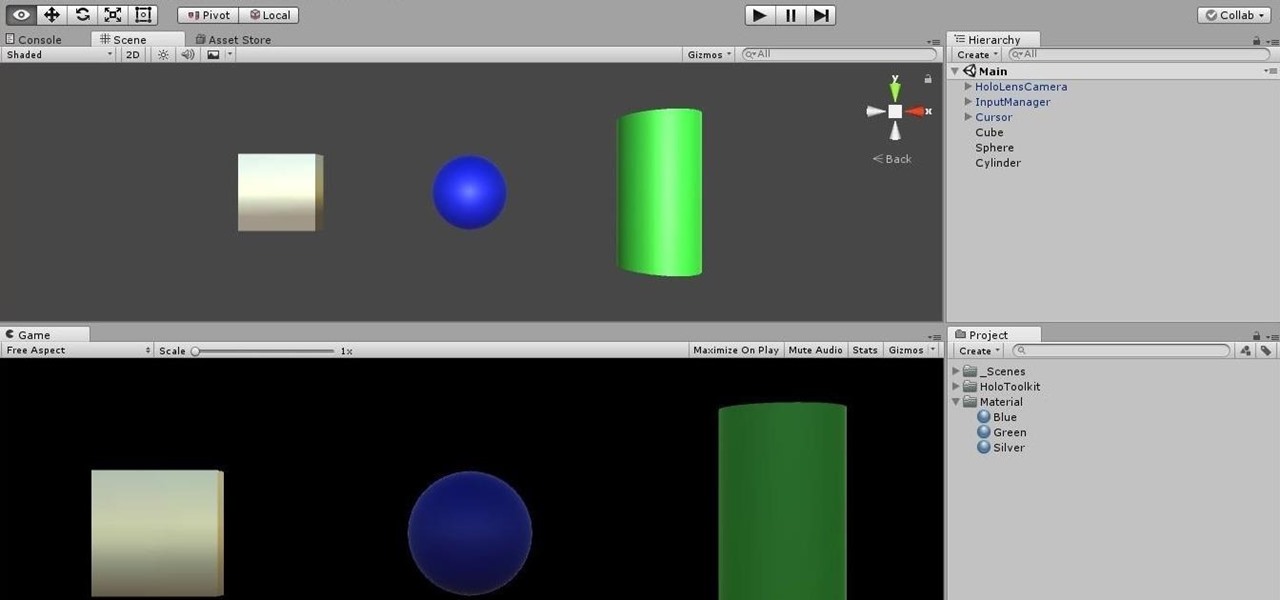

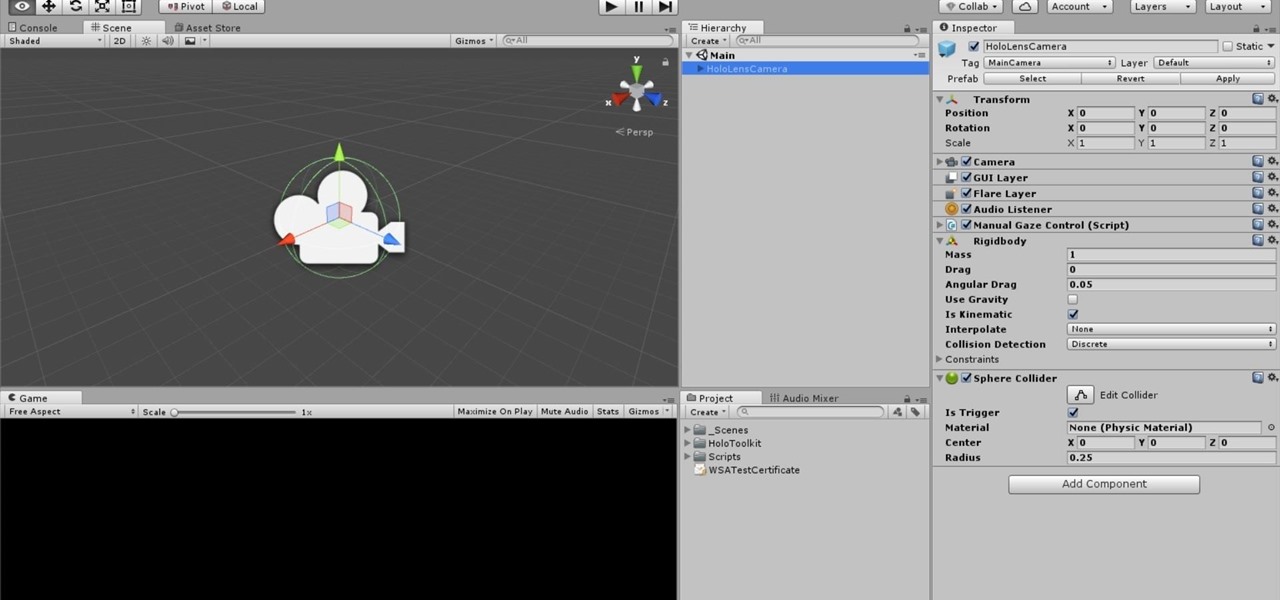

HoloLens Dev 101: Building a Dynamic User Interface, Part 2 (The System Manager)

Now that we have installed the toolkit, set up our prefabs, and prepared Unity for export to HoloLens, we can proceed with the fun stuff involved in building a dynamic user interface. In this section, we will build the system manager.

HoloLens Dev 101: Building a Dynamic User Interface

Generally speaking, in terms of modern devices, the simpler you make an interface to navigate, the more successful the product is.

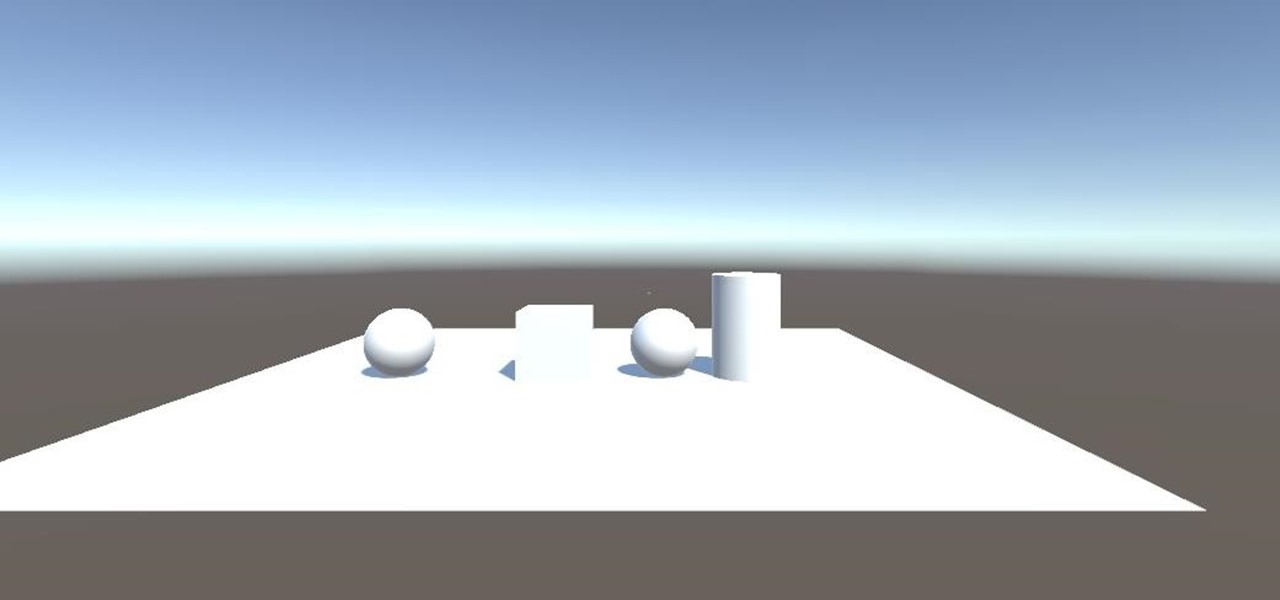

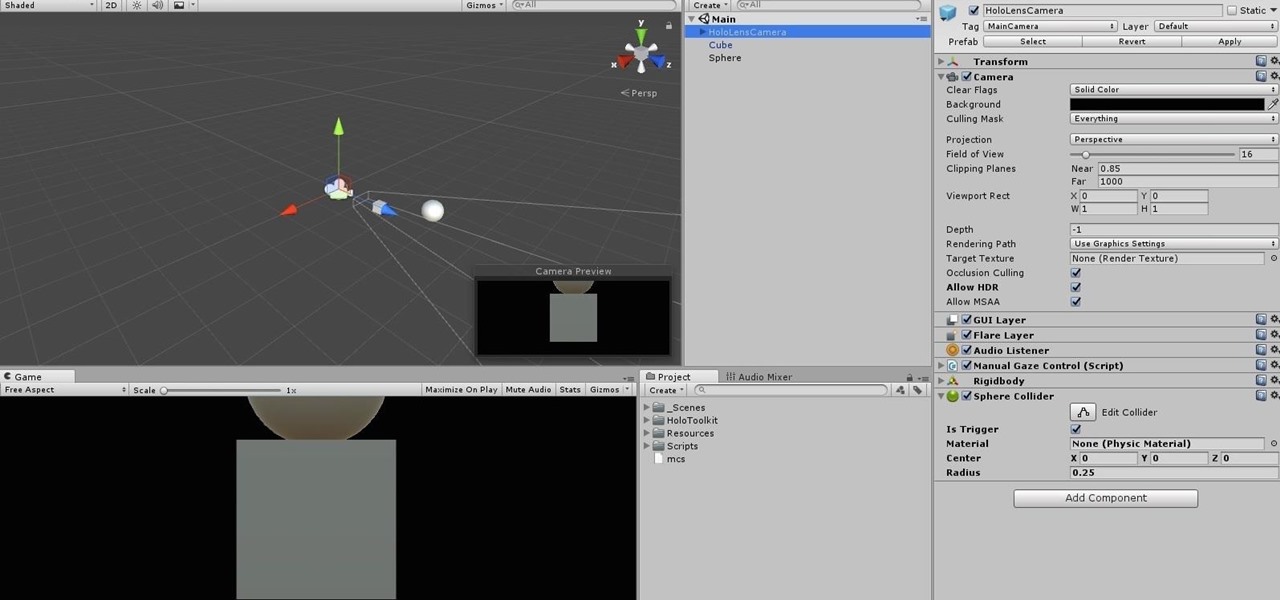

HoloLens Dev 101: Building a Dynamic User Interface, Part 1 (Setup)

Alright, let's dig into this and get the simple stuff out of the way. We have a journey ahead of us. A rather long journey at that. We will learn topics ranging from creating object filtering systems to help us tell when a new object has come into a scene to building and texturing objects from code.

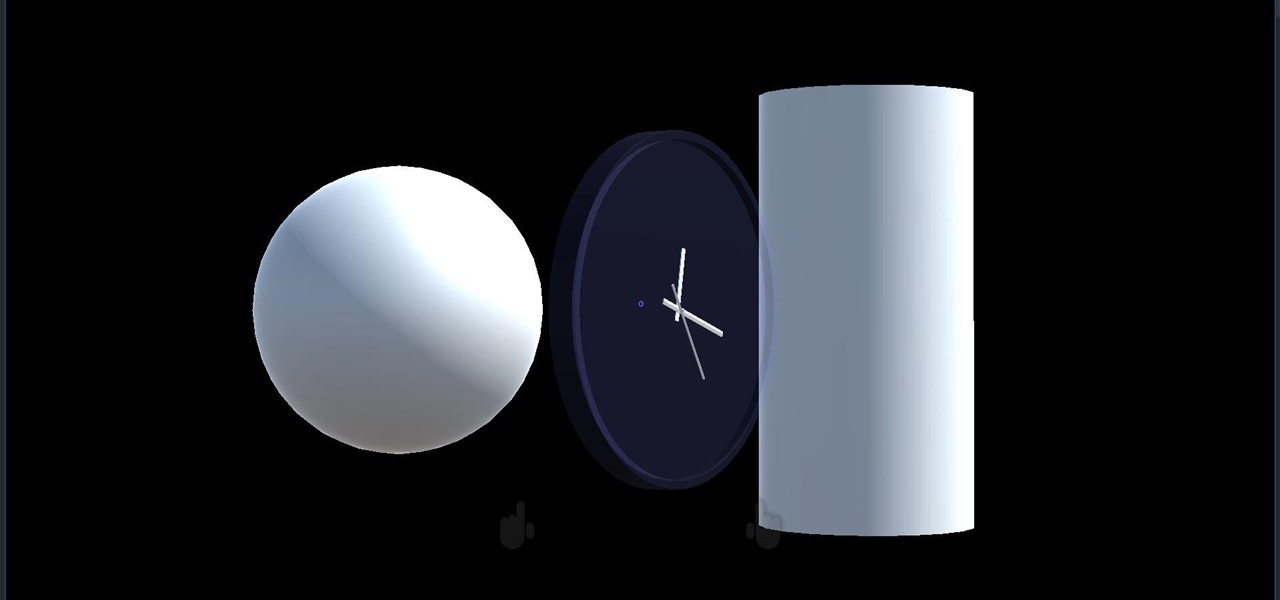

HoloLens Dev 101: How to Get Started with Unity's New Video Player

The release of Unity 5.6 brought with it several great enhancements. One of those enhancements is the new Video Player component. This addition allows for adding videos to your scenes quickly and with plenty of flexibility. Whether you are looking to simply add a video to a plane, or get creative and build a world layered with videos on 3D objects, Unity 5.6 has your back.

HoloLens Dev 101: How to Use Coroutines to Add Effects to Holograms

Being part of the wild frontier is amazing. It doesn't take much to blow the minds of first-time mixed reality users; merely placing a canned hologram in the room is enough. However, once that childlike wonder fades, we need to add more substance to create lasting impressions.

HoloLens Dev 101: How to Explore the Power of Spatial Audio Using Hotspots

When making a convincing mixed reality experience, audio consideration is a must. Great audio can transport the HoloLens wearer to another place or time, help navigate 3D interfaces, or blur the lines of what is real and what is a hologram. Using a location-based trigger (hotspot), we will dial up a fun example of how well spatial sound works with the HoloLens.

HoloLens Dev 101: How to Create User Location Hotspots to Trigger Events with the HoloLens

One of the truly beautiful things about the HoloLens is its completely untethered, the-world-is-your-oyster freedom. This, paired with the ability to view your real surroundings while wearing the device, allows for some incredibly interesting uses. One particular use is triggering events when a user enters a specific location in a physical space. Think of it as a futuristic automatic door.